Maybe it is just me.

Since Google started pushing everyone to make their sites mobile-friendly, many pages are now a pain to read on a regular laptop or monitor.

Example SEO blog: moz.com/blog

Take the Moz blog, for example. Great on mobile, not good to read on a desktop. A HUGE image up front pushes all content below the fold. That's just not good, neither for SEO nor for usability.

Desktop design due to Google push? On a full-screen screenshot of my 14" laptop, the image is all I see.

Example news blog: Search Engine Land

Similar issue. I really do NOT like headlines that big.

Desktop design due to Google push? On a full-screen screenshot of my 14" laptop, I see a lot of headline, and... ads.

Now, let's look at a few more.

Amazon uses a different URL concept (at least in parts). The mobile experience is not that great: when I hit the back button, I get the desktop homepage on my phone screen. I had hoped for something different.

Best Buy is not really different, showing off their headline :-). The responsive layout does not seem optimized, at least not for me.

Dell? We have some great pages, some OK pages (many on a separate URL on m.dell.com), and some that definitely have room for improvement when it comes to mobile/responsive design. We have lots of teams working on improving that, with some country setups on different URLs and some responsive (http://www.dell.com/en-us/work/learn/large-enterprise-solutions, for example). But responsive design lends itself to some content, like a consulting service description, and not to other content, like picking a laptop out of a larger selection.

Many sites need to serve content to mobile users much better than they currently do, and Google's push is a good reminder. I do think they have gone a bit far, and I am not sure they are aware that other industries have different needs. Google has it easy: its content and search lend themselves to responsive design, but it is not really fair to compare search and search results to news, magazine, and eCommerce sites. Those sites have much more complex processes that users need to go through, and their business models are based on something other than the exploitation of personal data.

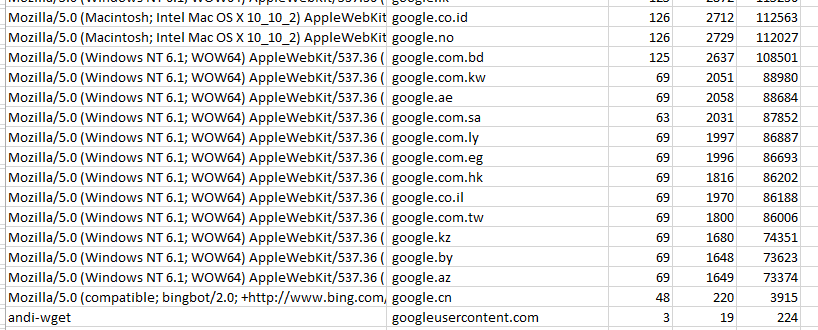

Doesn't Google's own data actually confirm that there are industries where mobile is really important and some where it is not? Here is data from the Google AdWords tool, using just a few random terms to show some variance:

Not really a big surprise when you think about it. And even for the keyword 'search', 51% of searchers use a PC/monitor, not a phone or tablet, according to AdWords data. I understand the search algorithm change was not large, but as all of the above indicates, it really should not be larger either. Many industries don't have that much mobile share, and responsive design does not work well for complex tasks. Such tasks can be made to work well with adaptive design, and even better with separate URLs and layouts, but that is slow, complex, and expensive, so it takes an even larger mobile share to justify the investment.

But maybe I am totally wrong and just have not seen the great examples out there.

Do you have an example of a site with a complex task that really works well on both phone and monitor in a responsive layout?